Posts: 1645 · Following: 139 · Followers: 90

I'm currently working on my second novel, which is complete but in the editing stage. I wrote my first novel over 20 years ago but didn't write much again until now.

I post about #Coding, #Flutter, #Writing, #Movies and #TV. I'll also talk about #Technology, #Gadgets, #MachineLearning, #DeepLearning and a few other things as the fancy strikes ...

Lived in: 🇱🇰🇸🇦🇺🇸🇳🇿🇸🇬🇲🇾🇦🇪🇫🇷🇪🇸🇵🇹🇶🇦🇨🇦

Fahim Farook

As seems to be happening everywhere, Draft2Digital, too, has apparently decided that authors are the cash cow and has started charging fees 😕

I've pulled all my books from there till I find a replacement.

https://draft2digital.com/blog/understanding-d2ds-activation-and-maintenance-fees/

#writing #indieauthor #gouging

Fahim Farook

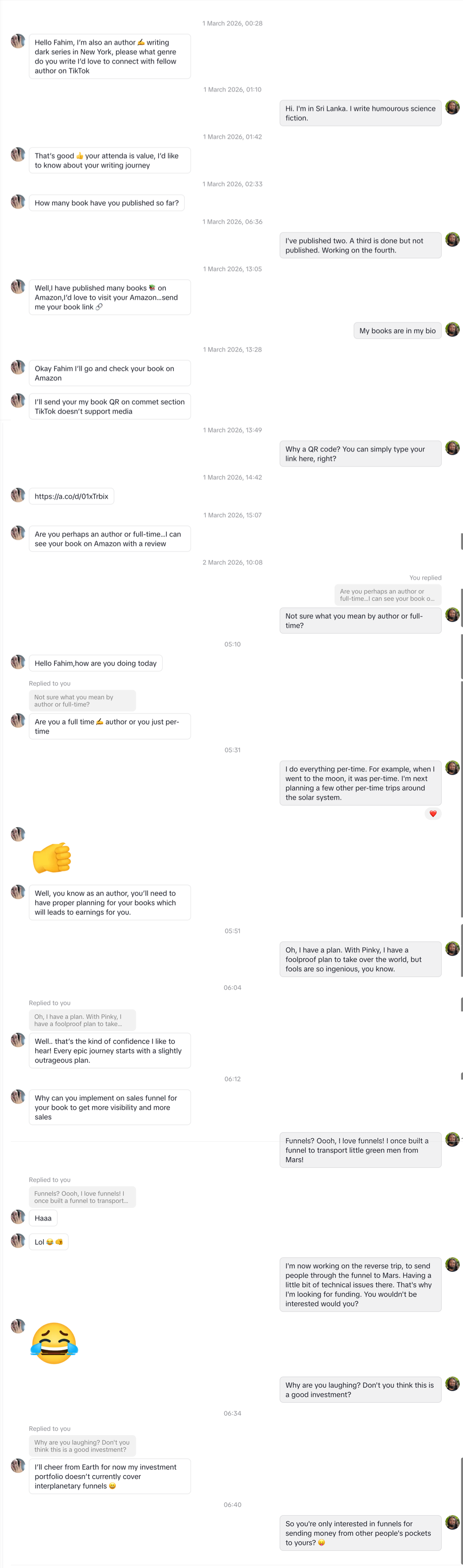

When a "fellow author" from New York (who strangely can't send a simple web link) tries to pitch you on "marketing services," there is only one logical response: In-character sci-fi nonsense.

I spent this morning explaining my interplanetary transportation funnels to a scammer who just wanted to talk about "sales funnels." Apparently, their investment portfolio doesn't cover Martian people transport yet — just transporting money from the pockets of unsuspecting writers 😛

Friendly reminder to my fellow writers: if someone slides into your DMs to "connect" and immediately pivots to your marketing strategy or asks for money, they aren’t your peer — they’re a bot or a grifter. Keep your wallet closed, and your sense of humor open!

And always have some fun with them. Who knows, it might get that creativity juice flowing 🙂

#ScamBaiting #AuthorCommunity #WritingTips #ScamAlert #SciFiWriter #MarketingScams #FunnelsFromMars

A long, vertical screenshot of …

Fahim Farook

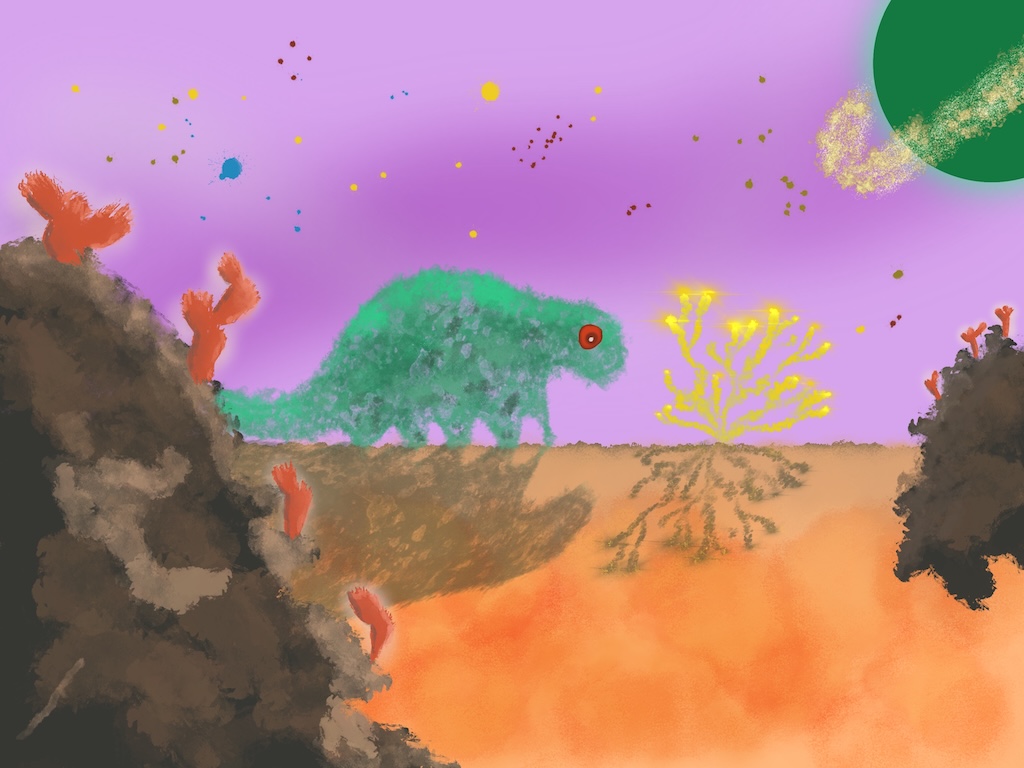

Instead of the usual "Animal Antics" paintings, I did something different this week — I call it "Alien Dreams" 🙂

#Art #Painting

A painting of an alien landscap…

Fahim Farook

Continuing on with my "Animal Antics" series, here's "Doggerel Writer" 🙂

I'm happier with this one than I was with the previous paintings since I see fewer issues, but I still feel there's room for improvement.

#Art #Painting #AnimalAntics

A painting of a furry dog stand…

Fahim Farook

To go along with the previous painting titled "Mouseketeer", I've created a new one called "Catechist" 🙂

In fact, I'm thinking of doing a whole series of these ... Will I get there? Or will my Apple Pencil break? Tune in next time to find out 😛

#Art #Painting #AnimalAntics

A painting of a tall building w…

Fahim Farook

I've been slowly building an ecosystem of apps: an issue tracker for bugs and to-dos, an app that sends new app builds to my Android/iOS devices, like AirDrop (for Android), and installs them automatically, an ebook reader that actually works, etc.

Today, I had it all working together beautifully. As Hannibal would say, "I love it when a plan comes together" 🙂

Now I can put aside the coding (mostly) and work on my art 🙂

#Planning #Dev #Art

Fahim Farook

This is an image which has been rattling around in my head for a few days now. I call it "Mouseketeer" 😛

#Art #Ideas #Painting

A painting of a mouse wearing a…

Fahim Farook

An icon for an app that I'm trying out. Not quite sure whether it works or not, but it's the first bit of drawing I've done in a fair while ...

#Art #Icon #Ideas

An image with a yellow backgrou…

Fahim Farook

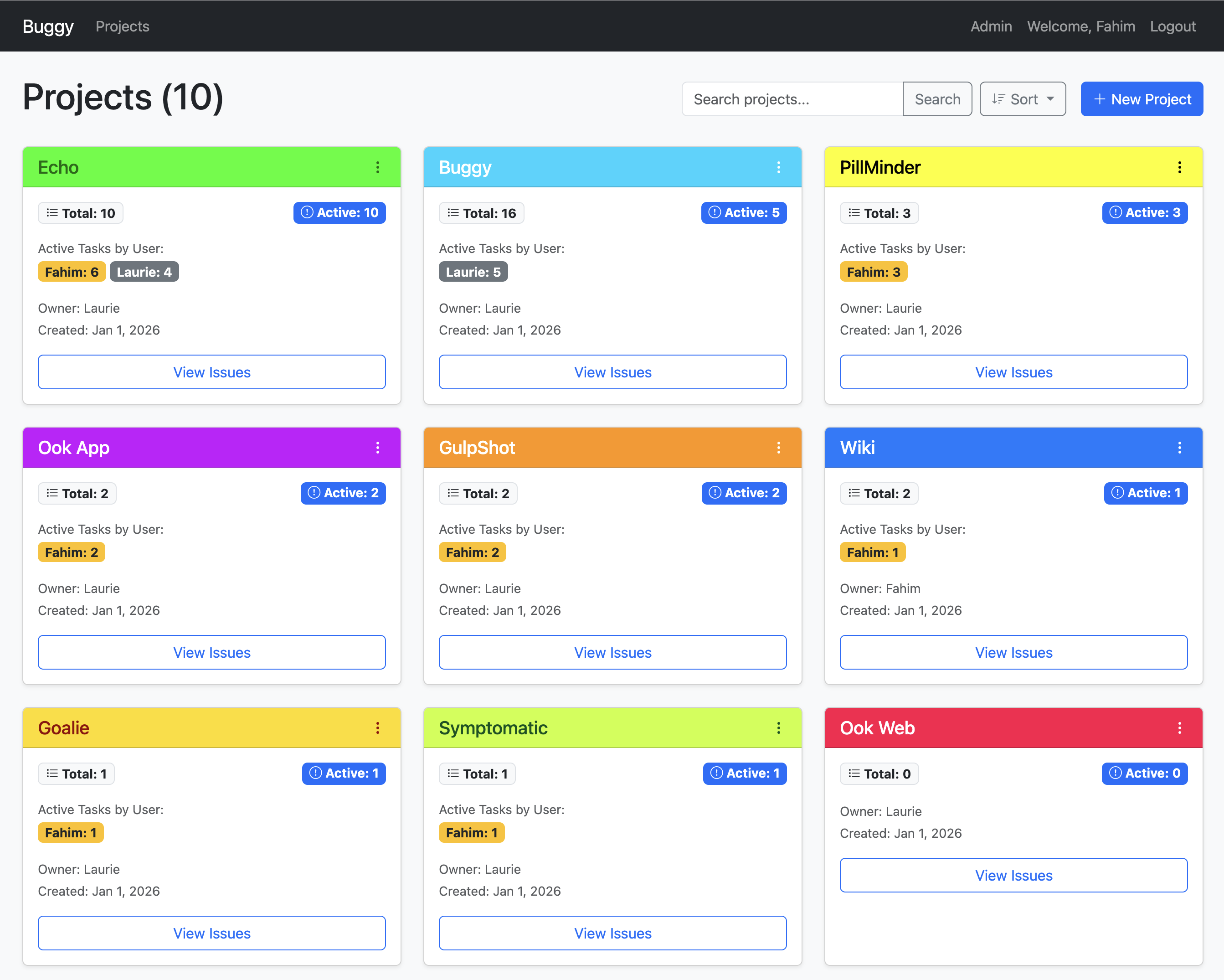

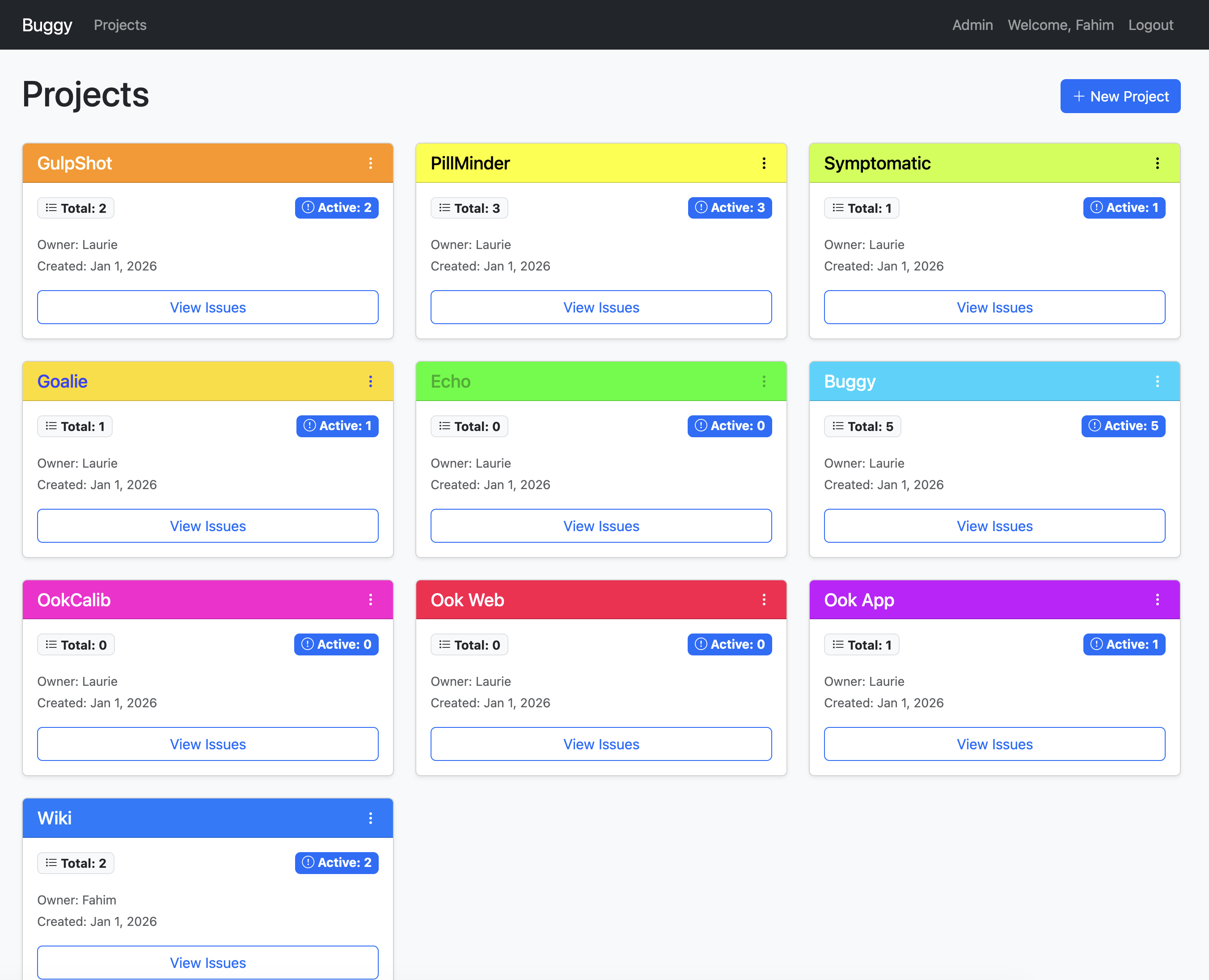

Created a simple, lightweight, easy-to-install/deploy issue tracker yesterday for our personal use. We like it so much that I've decided to release it as an open-source project:

https://github.com/FahimF/Buggy

Let me know if you've any feedback 🙂

#OpenSource #BugTracking #IssueTracking

A web-based bug tracker showing…

Fahim Farook

Started the year by creating a quick and lightweight bug tracker 🙂 Been using multiple solutions, but none of them really worked for us. This one is customized to meet our particular requirements.

Within an hour of creating it, the wife has already added a bunch of projects - and that's not even half of the projects that I work on for our personal use 😛

#Tools #Development #Web

A web-based bug tracker showing…

Fahim Farook

"Now You See Me: Now You Don't" (https://www.imdb.com/title/tt4712810/) was disappointing.

It telegraphed what was coming way too early - if you didn't foresee the major reveal at the end way before you got there, you weren't paying attention 😛 (And I don't blame you for not paying attention ... it wasn't that interesting.)

And the sequence in the middle with them trying to outdo each other doing tricks? Annoying! And pointless.

#Movies #English

Fahim Farook

"The Running Man" (https://www.imdb.com/title/tt14107334) seems hyper-violent (like a lot of stuff these days) but on the other hand, it managed to keep my attention to the end. So probably one of the better action movies in the past couple of years?

https://www.imdb.com/title/tt14107334

#Movies #English #Action #SciFi

Fahim Farook

"Aaromaley" (https://www.imdb.com/title/tt36587142) had a slightly slow start, but it picks up quickly and really comes into its own after the interval. It's a beautiful movie about people, relationships, and feelings. And to suddenly hear SPB singing "Anjali" - a feeling in a category all its own ...

A beautiful, emotional, and heart-warming romance that's well worth watching.

#Movies #Tamil #Romance

Fahim Farook

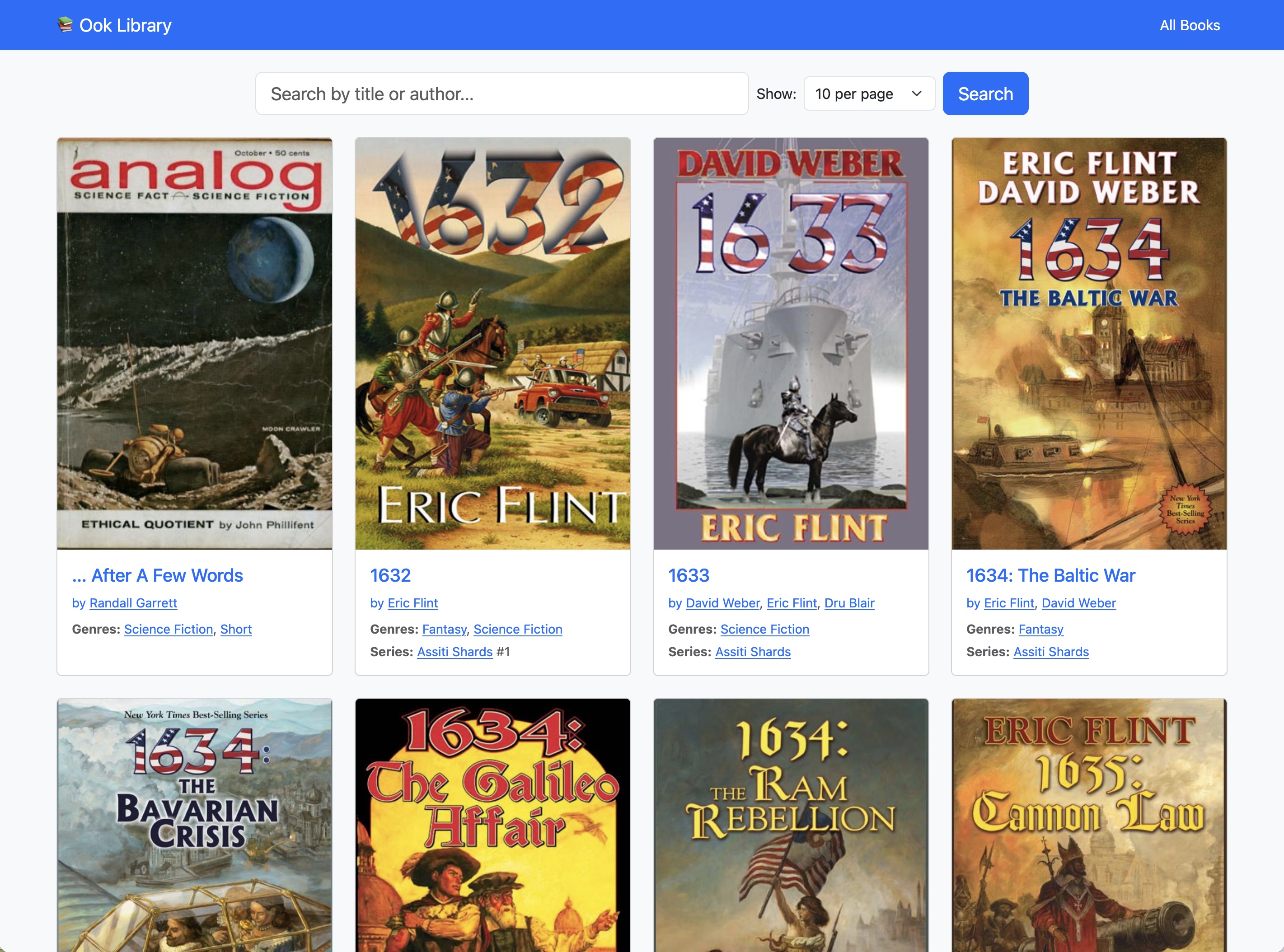

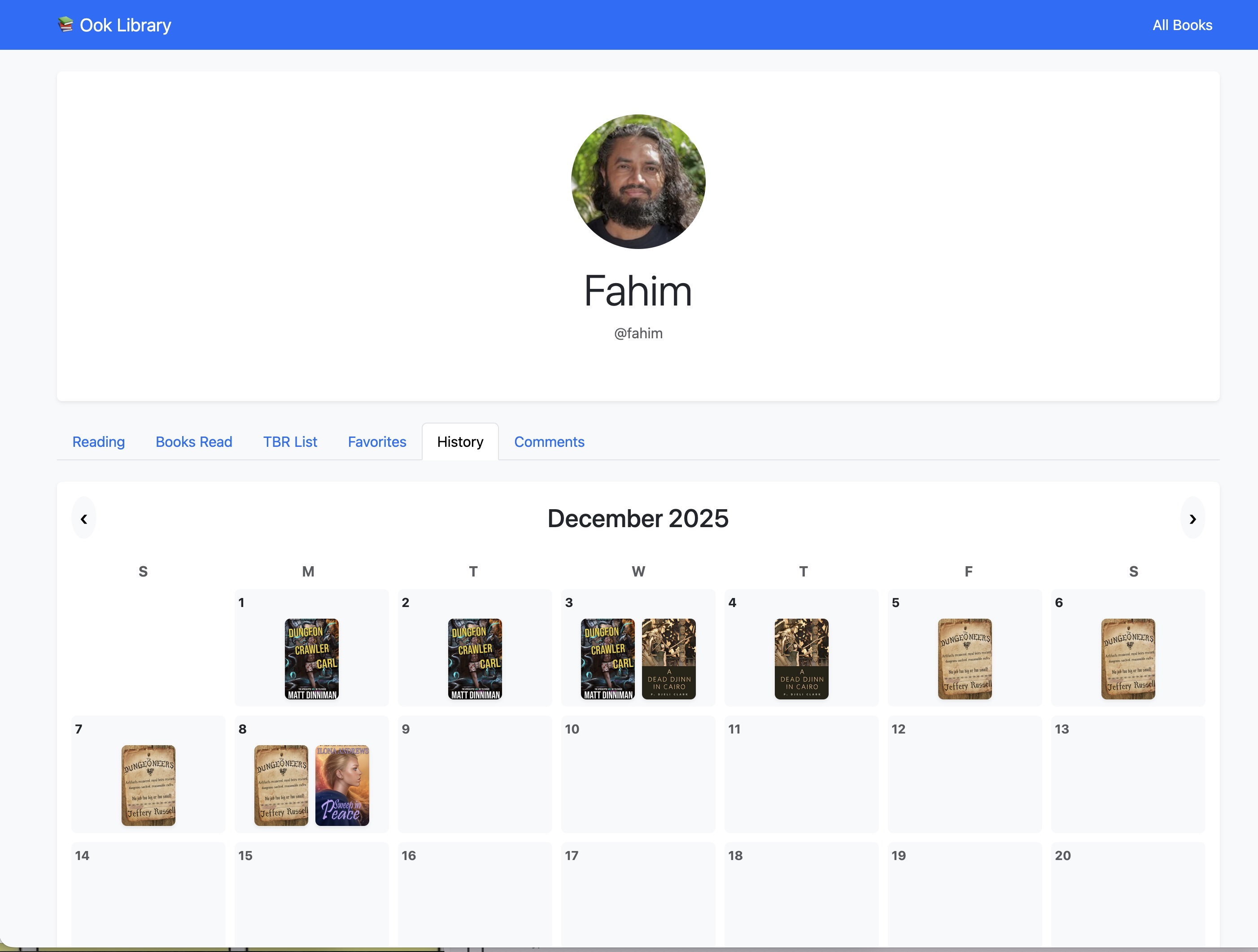

I've been coding a web-based library interface to complement my book reading app.

The library simply shows our books and allows the wife and me to have separate user profiles showing the books we're reading, our reading history, and our comments.

Working pretty well.

#Reading #Books #Coding #Library

A web-based library interface s…

A web-based user profile with t…

Fahim Farook

I keep hearing how Firebase has "generous" usage on the free plan. I started working on a library app with 4000+ books. Two data syncs and I was through my free plan 😛

Switched over to a local server with SQLite immediately. Maybe I'll look at Parse again?

#Coding #Platforms

Fahim Farook

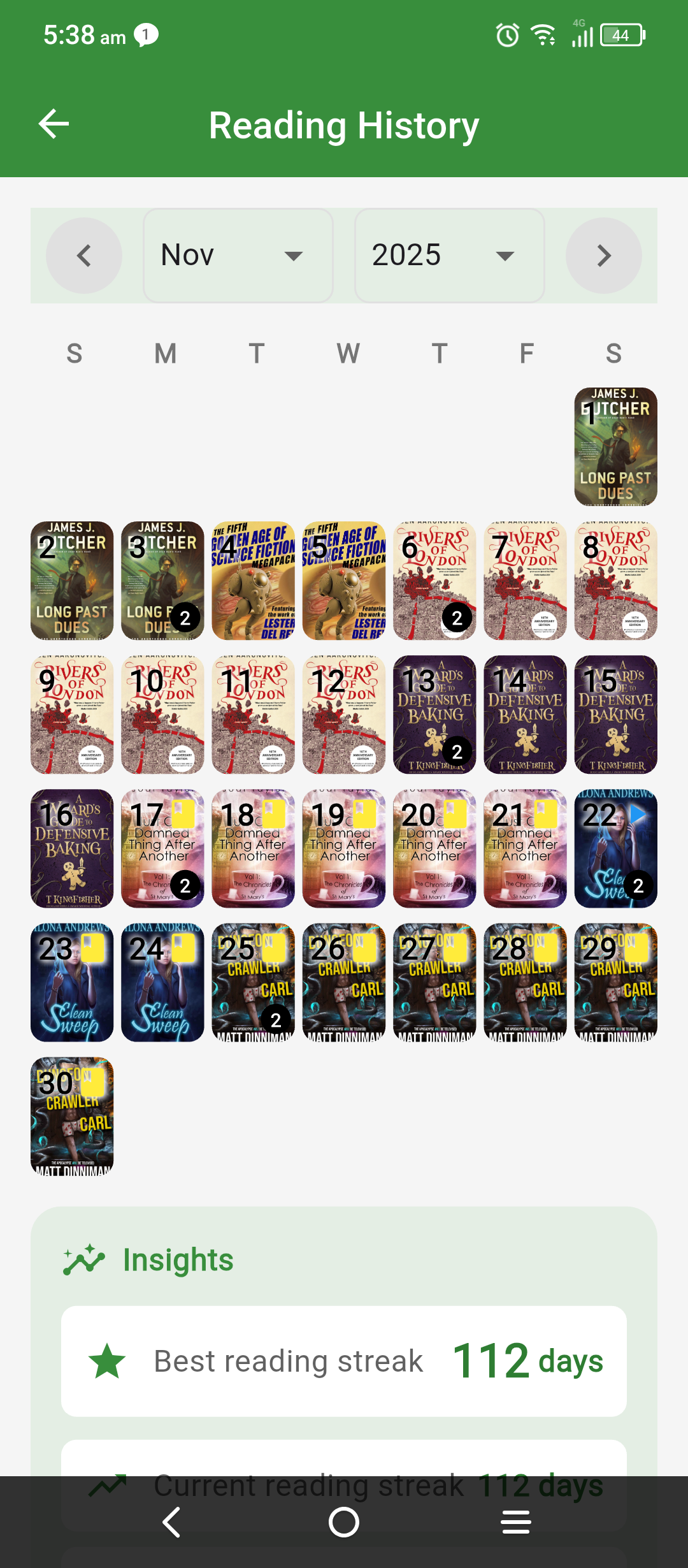

What with the storm and flooding in Sri Lanka and other stuff going on, I haven't had time to do a reading update. But maybe time to do one now to close out November?

After DNFing "Just One Damned Thing After Another", I started on "Clean Sweep". This one I liked 🙂

The story was engaging, and the writing was clean. It wasn't the kind of writing that made me want to write like that, but the story was good. And I finished the book in 4 days, which is a record for me ... Or I wasn't busy 😛

I'll be returning to this series!

Then I read "Dungeon Crawler Carl".

I knew Matt Dinniman a long time ago (before DCC) and he's a great guy. The book was interesting.

What interests me most about a book is the plot: figuring out what will happen before it happens.

Couldn't do that much here 😛

For one thing, you know what will happen ultimately based on the premise - you know that Carl will make it to the end of the dungeon 🙂

For another, the plot points are like mini-stories. Each section of a level takes a chapter or two and is done. There are very few overarching plot elements which act as hooks. The main attraction is to keep reading to see what happens next.

But the plot twists as he progressed were entertaining and creative. So I kept reading, and reading, and reading.

Again, not quite the kind of thing I'd want to write myself, but definitely something that will keep me reading!

I'll be coming back to this series. Both Ilona Andrews and Matt make me want to come back and finish the whole series. But there are so many books ....

#Reading #Books #MiniReviews

A screenshot from a reading app…

Fahim Farook

"Sunny Sanskari Ki Tulsi Kumari" (https://www.imdb.com/title/tt30742355) was a fun movie! Didn't expect too much from it, but as is usually the case when I don't expect much from Varun Dhawan, he surprised me 😛

Another great Bollywood romance!

#Movies #Hindi #Bollywood

Fahim Farook

I have a lot of notes scattered across multiple apps - OneNote, Affine, Apple Notes, Obsidian etc. So I decided to combine all of them in one place 🙂

Getting everything into Obsidian was easy enough since Obsidian has a bunch of useful importers. So that was done, but then I needed to be able to sync the notes across the local network to other machines and with my wife.

Sure, Obsidian has their sync functionality but that's a paid subscription. Then there is at least one open-source sync solution but it seemed a bit heavy for my needs. So, as one does when one is a developer, I decided to write my own 😛

I wanted something that only syncs over the local network, is easy to deploy and install, and is lightweight. I think I have all those boxes ticked. It's a tiny Go server for the server side and an Obsidian plugin for the client side.

And in case anybody is interested in using it (or testing it, or contributing to it), I decided to make it open-source. You can find it on GitHub here:

https://github.com/FahimF/GoSync

#Obsidian #Sync #Go #OpenSource

Fahim Farook

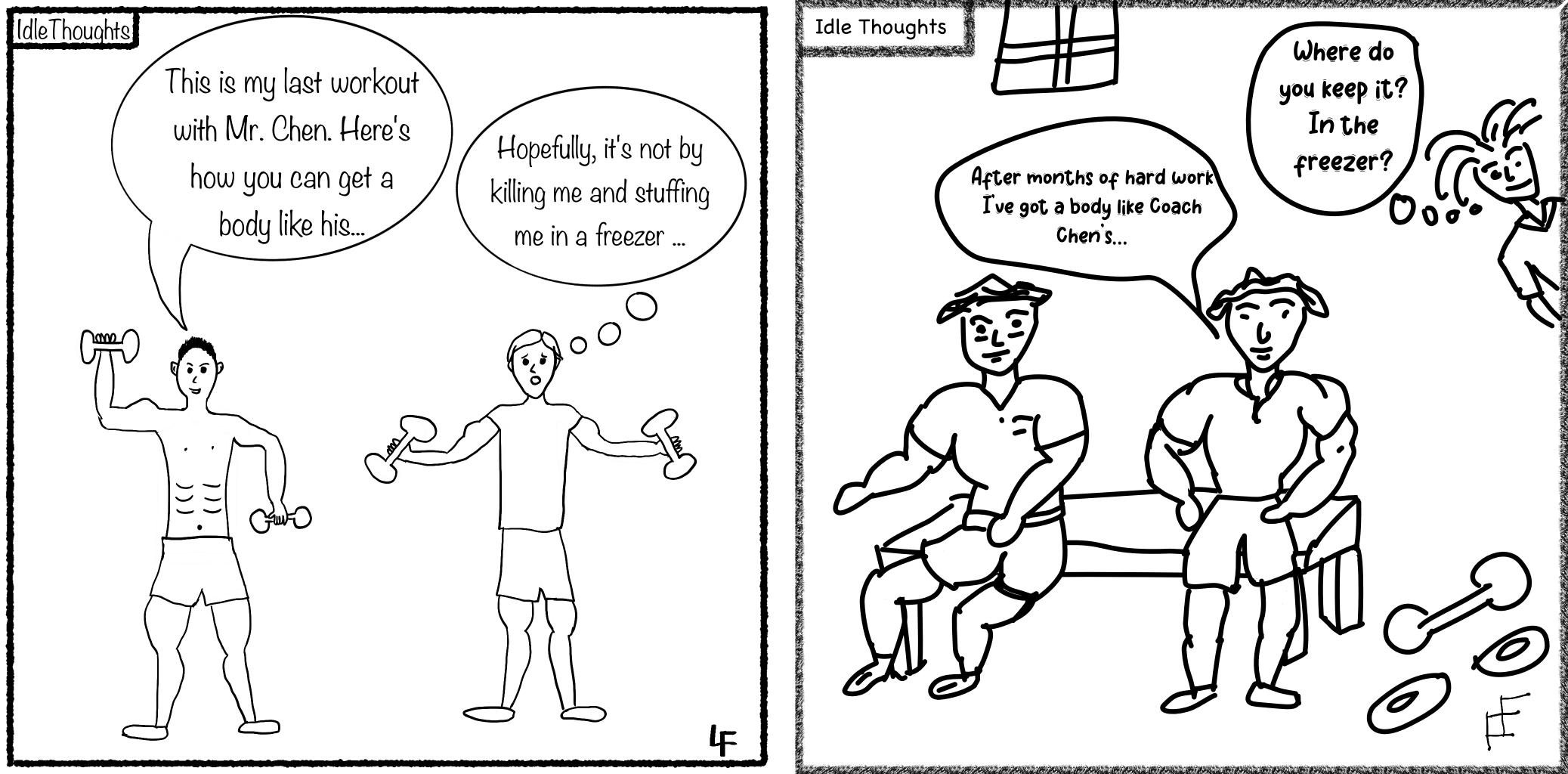

So the wife and I did our cartoons for this week based on a Facebook video ... or at least, my/our reaction to it 😛

https://write.farook.org/idle-thoughts-05/

#Cartoon #Drawing #Art

Two cartoons by different peopl…
